AI for X-Ray Scans: A Double-Edged Sword in Medicine

Artificial intelligence can analyze medical scans far faster than human readers and, on some tasks, with accuracy that matches or exceeds theirs. This can lead to earlier disease detection and better patient outcomes. AI systems, however, carry significant risks, such as misdiagnosis caused by algorithmic bias. Critical concerns also remain about patient data privacy and about accountability when an AI X-ray system makes a mistake.

| Metric | AI (CheXNeXt, Stanford, 2018) | Human Radiologists |
|---|---|---|
| Pathologies Diagnosed | Performed as well as or better for 11 out of 14 pathologies | Baseline for comparison |
| Time to Read 420 X-rays | 90 seconds | Approximately 3 hours |

The Promise: How AI Improves Diagnostic Accuracy

Artificial intelligence is not just a theoretical concept in medicine; it is a practical tool that delivers measurable improvements in diagnostic accuracy. By leveraging immense computational power, AI algorithms analyze medical images with a speed and consistency that surpasses human capability. This enhancement promises to transform radiology, making diagnoses faster, more precise, and ultimately more effective for patient care.

Faster and More Efficient Analysis

One of the most immediate benefits of AI in radiology is its incredible speed. A human radiologist might spend hours meticulously reviewing hundreds of scans. An AI system can process the same workload in minutes. This efficiency extends beyond image analysis and positively impacts the entire clinical workflow. Healthcare organizations now use AI to streamline operations in several key ways:

- Optimizing Patient Schedules: AI systems analyze appointment data and resource availability to create efficient schedules, significantly reducing patient wait times from weeks to days in some cases.

- Enhancing Resource Coordination: AI platforms digest data from electronic health records to identify the best timing for surgeries and discharges, preventing bottlenecks and smoothing out patient transitions.

- Automating Administrative Tasks: Repetitive clerical work can be automated, freeing up medical staff to focus on more critical, patient-facing activities.

Companies like Aidoc and Zebra Medical Vision have demonstrated this impact in real-world settings. By automatically flagging critical conditions like intracranial hemorrhages on CT scans, their systems have reduced the time to diagnosis by over 20%, enabling faster clinical responses in life-threatening emergencies.

Detecting What the Human Eye Might Miss

Beyond speed, AI possesses a unique ability to detect subtle patterns that are difficult or impossible for the human eye to perceive. Radiologists, despite their expertise, can be affected by fatigue or visual limitations, leading to missed findings. AI algorithms, however, are trained on vast datasets and can identify minute abnormalities with unwavering consistency.

AI systems are particularly effective at identifying:

- Barely perceptible lung nodules indicative of early-stage cancer.

- Hairline fractures or small cortical breaks that are easily overlooked.

- Faint pleural lines that may signal a collapsed lung.

- Subtle signs of bone degeneration or osteoporosis.

Tools like Radiobotics’ RBfracture, now approved for use in the UK's NHS, have shown they can improve the diagnostic sensitivity for fractures from 74% to 83% without increasing false positives. This capability helps address the persistent challenge of missed fractures and enhances diagnostic confidence.
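
To make the sensitivity figures concrete, the short sketch below computes sensitivity and specificity from confusion-matrix counts. The counts are hypothetical, chosen only so that sensitivity works out to 74% and 83% while the false-positive count stays the same; they are not taken from any RBfracture evaluation.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Share of scans with a real fracture that were flagged."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Share of fracture-free scans that were correctly left unflagged."""
    return true_neg / (true_neg + false_pos)


# Hypothetical reading session: 100 scans with a fracture, 400 without.
unaided = {"tp": 74, "fn": 26, "tn": 380, "fp": 20}
ai_assisted = {"tp": 83, "fn": 17, "tn": 380, "fp": 20}

for label, c in (("unaided", unaided), ("AI-assisted", ai_assisted)):
    print(
        f"{label}: sensitivity={sensitivity(c['tp'], c['fn']):.0%}, "
        f"specificity={specificity(c['tn'], c['fp']):.0%}"
    )
```

In this made-up session, nine more fractures are caught while specificity is unchanged, which is what "higher sensitivity without more false positives" means in practice.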

Enabling Earlier Disease Detection

The combination of speed and precision directly translates into the most crucial benefit: earlier disease detection. Identifying conditions like cancer or osteoporosis in their initial stages dramatically improves patient outcomes. A growing number of AI tools have received regulatory approval and are being deployed for this very purpose.

- Computer-Aided Detection (CAD) systems have been in use since the 1990s to help find lung nodules and assist in mammography.

- ScreenPoint’s Transpara and iCAD’s ProFound AI are modern tools that automatically highlight suspicious masses on mammograms, serving as a second pair of eyes for radiologists.

- The Mirai risk model analyzes a patient's mammogram to predict long-term breast cancer risk, allowing for personalized screening schedules.

- BoneXpert is an established tool that quantifies bone age from pediatric hand X-rays, aiding in the diagnosis of growth disorders.

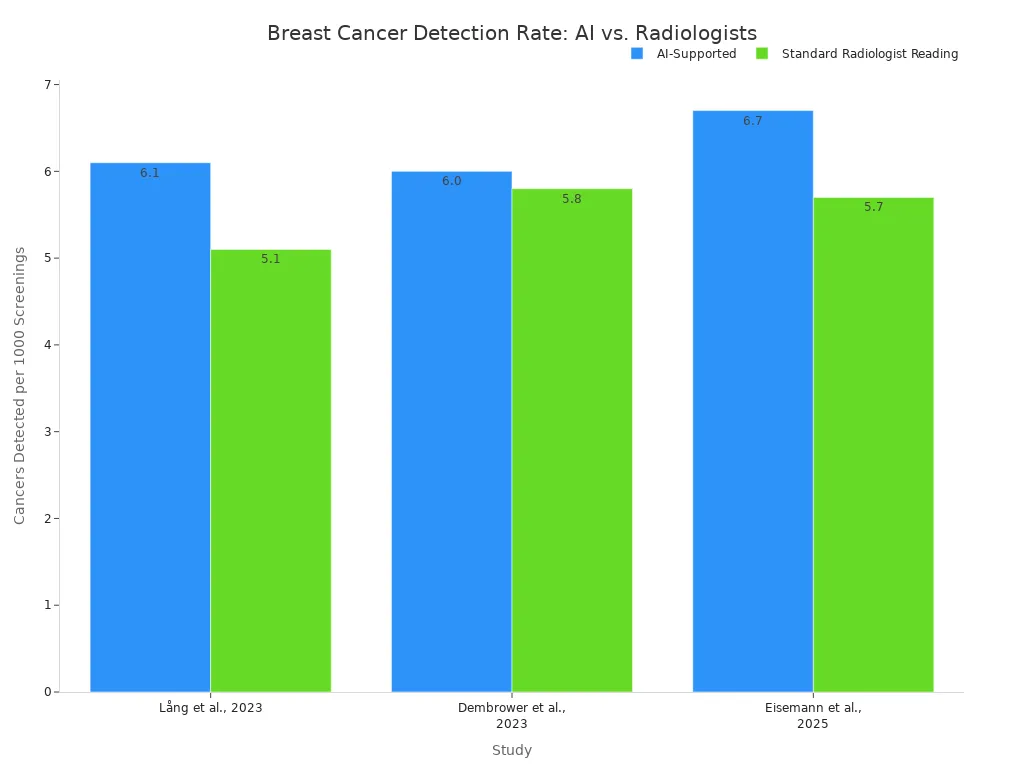

Recent studies indicate that an AI X-ray system can match or even exceed the performance of human experts on specific tasks. Research comparing AI-supported screening to traditional double reading by two radiologists has shown compelling results. For example, one major study found that AI-supported screening detected more cancers (6.1 per 1,000 women vs. 5.1) while reducing the human workload by 44%. Another trial observed a 17.6% higher cancer detection rate with AI support.
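
The detection rates quoted for the first study are easy to put in relative terms. The sketch below is plain arithmetic on the two rates given in the text (6.1 and 5.1 per 1,000 women); the function name is illustrative, and the result is separate from the 17.6% figure reported by the other trial.

```python
def relative_change(new: float, old: float) -> float:
    """Fractional change of `new` relative to `old`."""
    return (new - old) / old


ai_rate = 6.1        # cancers detected per 1,000 women, AI-supported screening
standard_rate = 5.1  # cancers detected per 1,000 women, standard double reading

extra_per_1000 = ai_rate - standard_rate
increase = relative_change(ai_rate, standard_rate)

print(f"Extra cancers found per 1,000 women screened: {extra_per_1000:.1f}")
print(f"Relative increase in detection rate: {increase:.1%}")
```

One additional cancer per 1,000 women screened corresponds to a relative increase of roughly 20% in that study.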

These findings demonstrate that AI is not just a promising technology for the future; it is already a powerful ally in the present-day fight against disease, helping clinicians find cancers earlier and with greater accuracy.

The Peril: Risks of Using AI for X-Ray Scans

While the potential of AI in radiology is immense, its deployment is fraught with significant perils. These systems are not infallible. They introduce new categories of risk that challenge traditional medical and legal frameworks. Overlooking these dangers could lead to patient harm, erode trust in medical technology, and deepen existing health inequities. A clear-eyed assessment of these risks is essential for responsible innovation.

The Danger of Algorithmic Bias

The most pressing danger in medical AI is algorithmic bias. An AI model is only as good as the data it learns from. If the training data is not representative of the general population, the algorithm will develop blind spots, leading to systematic errors for certain groups. Regulatory bodies like the FDA highlight that machine learning systems can learn incorrectly, make wrong inferences, and introduce biases that are not covered by traditional product development.

This bias often originates from the datasets themselves. An AI model trained on images primarily from one demographic group will naturally perform worse on underrepresented populations. Studies have confirmed this alarming trend. AI algorithms for chest X-ray analysis show systematic underdiagnosis in specific subpopulations, including:

- Female patients

- Black and Hispanic patients

- Patients with lower socioeconomic status (e.g., Medicaid insurance)

This disparity means a Black patient, for example, is more likely to receive a false negative from an AI algorithm than a white patient. A 2024 study underscored this problem, finding that chest X-ray models trained at one hospital saw their diagnostic performance drop by up to 20% when used on data from other institutions. This reveals how hidden biases in local data can prevent an AI from working effectively elsewhere.
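
A disparity like this only becomes visible when error rates are broken down by patient group. The sketch below shows one minimal way to do that: it computes the false-negative rate per group from a list of audit records. The group names and records are synthetic and exist only for illustration; a real audit would use confirmed diagnoses and properly governed demographic data.

```python
from collections import defaultdict


def false_negative_rate_by_group(records):
    """records: iterable of (group, has_disease, model_flagged) tuples."""
    missed = defaultdict(int)
    diseased = defaultdict(int)
    for group, has_disease, model_flagged in records:
        if has_disease:
            diseased[group] += 1
            if not model_flagged:
                missed[group] += 1  # disease present but the model said "normal"
    return {group: missed[group] / diseased[group] for group in diseased}


# Synthetic audit data: 100 diseased patients per group, plus healthy controls.
records = (
    [("group_a", True, True)] * 90 + [("group_a", True, False)] * 10
    + [("group_b", True, True)] * 75 + [("group_b", True, False)] * 25
    + [("group_a", False, False)] * 50 + [("group_b", False, False)] * 50
)

rates = false_negative_rate_by_group(records)
for group, rate in sorted(rates.items()):
    print(f"{group}: false-negative rate {rate:.0%}")
```

An overall miss rate of 17.5% would hide the fact that, in this toy data, one group is missed two and a half times as often as the other.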

These biases can manifest in several ways, creating a significant risk of misdiagnosis and worsening health disparities.

| Bias Type | How It Harms Patients |
|---|---|
| Demographic Bias | An AI trained mostly on fair-skinned individuals may fail to detect skin cancer on darker skin tones. Similarly, a model trained on data from urban hospitals with advanced equipment may perform poorly in rural clinics with different machines. |
| Annotation Bias | Radiologists can disagree on the exact boundaries of a tumor. An AI trained on annotations from only one group of experts may learn their specific interpretive habits, leading to inaccurate results when judged by other clinicians. |
| Selection Bias | If a dataset for an AI X-ray tool only includes images from patients who already have a confirmed diagnosis, the model may struggle to identify the absence of disease in healthy individuals, leading to high rates of false positives. |

The "Black Box" Problem and Accountability

Many of the most powerful AI models operate as "black boxes." They take an X-ray image as input and produce a diagnosis as output, but their internal decision-making process is incredibly complex and opaque. Even the developers who build them cannot always explain precisely why the model arrived at a specific conclusion. This lack of transparency creates a critical problem for clinical adoption and legal accountability.

When an AI tool contributes to a misdiagnosis, who is at fault? The opaque nature of these systems makes it incredibly difficult to assign responsibility. This uncertainty creates a legal and ethical minefield for healthcare.

Clinicians are hesitant to trust a recommendation they cannot understand. Research shows that doctors do not necessarily need to know the complex math behind a model. Instead, they want practical, relevant information like confidence scores and clear reasoning for a diagnosis to make an informed clinical decision. Without this, the AI remains a mysterious tool rather than a trusted partner. This accountability gap involves several parties:

- AI Developers: They could face product liability claims if a flawed algorithm is considered a "defective product."

- Healthcare Systems: Hospitals may be held responsible for the tools they deploy under the principle of vicarious liability.

- Physicians: A radiologist could be liable for medical malpractice if their reliance on an AI tool falls below the accepted standard of care.

Current legal frameworks are struggling to adapt. In the United States, liability is often debated under existing laws for medical malpractice and product liability. The European Union is actively developing new directives to address AI liability, but even these may not fully resolve the issue for "black box" systems. This leaves clinicians and patients in a precarious position, navigating a new technological frontier without a clear map of responsibility.

Data Privacy and Security Vulnerabilities

AI systems in radiology require access to vast amounts of sensitive patient data, including images and electronic health records (EHRs). This centralization of data creates a high-value target for cybercriminals and introduces new security vulnerabilities that can compromise patient privacy and safety.

The risks extend beyond traditional data theft. AI systems themselves present unique weak points that malicious actors can exploit. Key vulnerabilities include:

- Training Data Leakage: AI models can sometimes "memorize" specific details from their training data. If the data is not perfectly anonymized, the model could inadvertently reveal protected health information (PHI) when making a prediction.

- Prompt Injection: Patient-facing AI interfaces, like symptom-checker chatbots, can be manipulated. Attackers can use carefully crafted inputs (prompts) to trick the AI into bypassing its safety protocols and revealing confidential system information.

- Ransomware and Malware: Cybercriminals can target AI systems to disrupt hospital operations, holding critical diagnostic tools hostage until a ransom is paid.

Perhaps most concerning is the threat to patient anonymity. While data is typically anonymized before being used for training, advanced AI techniques can reverse this process. A study in Scientific Reports demonstrated that a deep learning system could successfully re-identify patients directly from their chest X-ray images. This finding shatters the assumption that medical images are inherently anonymous and highlights a profound privacy risk in the age of AI.

The Human Factor: AI as a Tool, Not a Replacement

The integration of AI into radiology is not about replacing human experts but augmenting their capabilities. This technology holds the potential to alleviate significant workplace pressures. However, it also introduces new challenges related to professional skills and the necessity of human oversight. The goal is to create a partnership where technology supports, rather than supplants, clinical judgment.

Reducing Radiologist Burnout

Radiologist burnout is a critical issue. Post-pandemic, burnout rates among cardiothoracic radiologists reached 88%, with 80% of respondents feeling their workload was at or above capacity. AI offers a practical solution by automating tedious, repetitive tasks that contribute to fatigue. Tools can perform routine measurements and calculations in seconds, freeing clinicians from manual data entry. An AI X-ray system can also handle background tasks like correcting dictation or reformatting reports. This automation reduces cognitive load, allowing radiologists to focus their mental energy on complex interpretations and critical decision-making.

The Risk of De-skilling Professionals

While AI can reduce burnout, over-reliance on it poses a significant risk to the profession. Medical education experts warn about the potential for "deskilling," where foundational clinical skills erode over time. A study involving colonoscopies showed that physicians' skills declined after relying on AI, making them less effective when the tool was removed. This highlights several concerns for radiology:

- Automation Bias: A tendency to over-trust AI, leading to missed findings if the algorithm fails.

- Upskilling Inhibition: Trainees may get fewer opportunities to learn core skills if AI consistently performs the task for them.

- Loss of Pattern Recognition: Radiologists could lose the ability to formulate diagnoses from first principles if they become too dependent on AI signals.

Emphasizing Human Oversight

The solution to these risks lies in emphasizing a collaborative human-AI model. AI should function as a sophisticated tool under the firm control of a human expert. This approach ensures patient safety while leveraging the technology's strengths.

The true value of an AI X-ray tool is realized when it serves as a "second set of eyes," not the primary decision-maker. This hybrid strategy improves outcomes by streamlining workflows, reducing workloads by nearly 40% in some areas, and flagging critical findings for immediate attention.

Ultimately, this model improves diagnostic confidence and allows for faster reporting. It frees radiologists to focus on complex cases and patient interaction, reinforcing their indispensable role in healthcare.

The Future: Striking the Right Balance with AI X-Ray Tech

The future of AI in radiology depends on a balanced approach. This approach must harness technological power while establishing strong safeguards. Success requires clear regulations, effective human-AI collaboration, and a commitment to continuous improvement. This ensures that technology serves patients and clinicians safely and ethically.

Developing Robust Regulations

Governments are creating new rules for medical AI. The FDA, for example, often regulates AI software as "Software as a Medical Device" (SaMD). Its level of oversight depends on the software's intended use and potential risk to patients. To avoid strict regulation, a tool must support a healthcare provider's decisions without replacing their independent judgment. The FDA's action plan for AI/ML software promotes transparency and good machine learning practices. It also provides for a predetermined change control plan, in which developers outline how an algorithm will be updated while ensuring it remains safe and effective.

The Collaborative Human-AI Model

The most effective model for the future is a partnership between humans and AI. In this model, the AI functions as an assistive tool, not a replacement. Radiologists use the AI's findings to verify their own preliminary diagnosis. This collaborative workflow respects professional autonomy and improves efficiency. A successful integration requires:

- Seamless integration into existing software to minimize disruption.

- A clear, human-readable interface that meets clinical needs.

- Clear guidelines on how to use the AI tool optimally.

- Policies that respect a clinician's final decision-making authority.

Continuous Training and Auditing

AI models are not static. They require constant oversight to ensure they perform accurately and fairly over time. Industry standards like the NIST AI Risk Management Framework provide a roadmap for governing and managing AI risks. Continuous auditing is essential to detect and correct issues like algorithmic bias or performance degradation.

The goal of continuous auditing is to monitor real-world performance, identify biases across different patient groups, and manage model updates through a controlled process.

This helps an AI X-ray tool remain reliable long after its initial deployment. Regular monitoring and retraining help maintain trust and keep the technology aligned with changing clinical needs and data.
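
One simple form of continuous auditing is to track a metric such as sensitivity over successive review periods and raise an alert when it drops below an agreed baseline. The sketch below illustrates the idea. The function name, the 90% baseline, the five-point tolerance, and the monthly counts are all assumptions made for this example, not values from any standard or product.

```python
def audit_sensitivity(batches, baseline, tolerance=0.05):
    """Return (label, sensitivity) for each batch that falls below baseline - tolerance.

    batches: list of (label, true_positives, false_negatives) from confirmed cases.
    """
    alerts = []
    for label, true_pos, false_neg in batches:
        confirmed = true_pos + false_neg
        if confirmed == 0:
            continue  # no confirmed cases in this period, nothing to measure
        sens = true_pos / confirmed
        if sens < baseline - tolerance:
            alerts.append((label, round(sens, 3)))
    return alerts


# Hypothetical monthly review: confirmed positives the model caught vs. missed.
monthly = [("month_1", 45, 5), ("month_2", 44, 6), ("month_3", 38, 12)]

alerts = audit_sensitivity(monthly, baseline=0.90)
print(alerts)
```

Here only the third month is flagged, because its sensitivity (76%) falls below the 85% floor. The same check can be run separately for each patient group to catch bias that an overall average would hide.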

AI in radiology is a powerful but imperfect tool. Its true value lies in augmenting, not replacing, the expertise of human radiologists. A successful future depends on a collaborative approach that maximizes benefits while actively managing risks to ensure patient safety and ethical standards. Experts envision a future where:

- AI becomes an invaluable tool, enhancing radiologists' capabilities.

- Multimodal AI delivers highly individualized medicine.

- Radiologists lead in creating tools that transform the practice.

FAQ

Will AI completely replace radiologists?

No. AI functions as an assistive tool to augment a radiologist's expertise. It improves efficiency and accuracy, but human oversight remains essential for patient safety and final decisions.

Is AI for X-rays safe to use now?

Yes, with proper oversight. Regulatory bodies like the FDA review these tools before they are cleared or approved for clinical use. Continuous auditing and human supervision are necessary to manage risks like algorithmic bias and ensure patient safety.

How does an AI learn to read X-rays?

Developers train AI models on vast datasets of labeled X-ray images. The algorithm learns to identify patterns associated with different medical conditions, mimicking the diagnostic process of human experts.
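
The learning loop itself can be illustrated with a deliberately tiny example. Real systems train deep neural networks on the pixels of many thousands of images; the sketch below instead fits a one-feature logistic regression to eight made-up "opacity scores," purely to show how a model adjusts its parameters from labeled examples. All names and numbers are hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train(features, labels, epochs=2000, lr=1.0):
    """Fit p = sigmoid(w * x + b) by full-batch gradient descent on log loss."""
    w, b = 0.0, 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(features, labels):
            error = sigmoid(w * x + b) - y  # prediction minus true label
            grad_w += error * x
            grad_b += error
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


# Made-up, centered opacity scores; label 1 means "abnormal", 0 means "normal".
features = [-0.4, -0.3, -0.2, -0.1, 0.1, 0.2, 0.3, 0.4]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = train(features, labels)
predictions = [1 if sigmoid(w * x + b) >= 0.5 else 0 for x in features]
print(predictions == labels)
```

Each pass nudges the weight so that predictions move closer to the labels, which is the same principle, at a vastly smaller scale, behind training an image model on labeled X-rays.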
